The problem

Most dynamic control tools expose loads of parameters (thresholds, ratios, attack times, saturation curves), but producers don’t think in those terms.

They think in outcomes:

- smoother, stable, squashed

- more forward

- bouncy, lively

- softer, less harsh

We believed there was an opportunity to replace algorithm-framed control with something that matched how people describe what they want to hear.

The bet

We started with the idea that a vocal could be controlled through a small number of perceptual goals rather than a large number of technical parameters.

Very early on, I sketched and prototyped an XY-style interface that let users move through a space of outcomes such as softer, more stable, or more focused, even though we didn’t yet have the signal processing to back it up.

That interface wasn’t just decoration. It was a commitment: if we were going to ask users to think this way, the sound engine had to make it true.

Forcing the DSP to live up to the interface

Once that mental model was in place, I built and iterated on five independent signal-processing modules in Max/MSP Gen:

- automatic gain staging

- two novel compressor topologies

- level-independent dynamic tone control

- variable-tone saturation

Each prototype was a different attempt to answer part of the same question: what algorithms would have to exist for this simple XY control to feel honest?

Some approaches sounded impressive but didn’t track user intent. Others mapped cleanly to how producers described what they wanted.

By listening to these with users, we could tell which ideas supported the interface and which undermined it. Only then did we converge on the final architecture.

Mapping intent to algorithms

The XY system now drives multiple DSP modules together behind the scenes. Moving a control doesn’t “turn up compression”; it moves the sound towards a perceptual outcome, coordinating several parameter changes within a single gesture.
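As a rough illustration of one gesture coordinating several changes, the sketch below blends four corner targets of an XY pad with bilinear interpolation. It is not the mapping used in the product: the `ModuleSettings` fields, the corner values, and the `settings_for` function are all hypothetical, chosen only to show the idea.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ModuleSettings:
    """Hypothetical per-module amounts; not the real product's parameters."""
    compression: float  # 0.0 (off) to 1.0 (heavy)
    tone_tilt: float    # -1.0 (darker) to 1.0 (brighter)
    saturation: float   # 0.0 (clean) to 1.0 (driven)


# Assumed corner targets for the pad, keyed by (x, y). All values are invented.
CORNERS = {
    (0, 0): ModuleSettings(0.0, 0.0, 0.0),
    (1, 0): ModuleSettings(0.8, 0.0, 0.1),
    (0, 1): ModuleSettings(0.1, -0.5, 0.3),
    (1, 1): ModuleSettings(0.9, -0.4, 0.5),
}


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation that returns a at t=0 and b at t=1."""
    return a * (1.0 - t) + b * t


def clamp01(v: float) -> float:
    """Keep a pad coordinate inside the 0..1 range."""
    return min(max(v, 0.0), 1.0)


def settings_for(x: float, y: float) -> ModuleSettings:
    """Bilinearly blend the four corner targets at pad position (x, y)."""
    x, y = clamp01(x), clamp01(y)
    blended = {}
    for f in fields(ModuleSettings):
        # Interpolate along x on the bottom and top edges, then along y.
        bottom = lerp(getattr(CORNERS[(0, 0)], f.name),
                      getattr(CORNERS[(1, 0)], f.name), x)
        top = lerp(getattr(CORNERS[(0, 1)], f.name),
                   getattr(CORNERS[(1, 1)], f.name), x)
        blended[f.name] = lerp(bottom, top, y)
    return ModuleSettings(**blended)
```

Calling `settings_for(0.5, 0.5)` returns one set of values for every module at once, so a single drag changes compression, tone, and saturation together. A real mapping would likely be non-linear and tuned by ear; bilinear blending is just the simplest form that makes the coordination visible.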

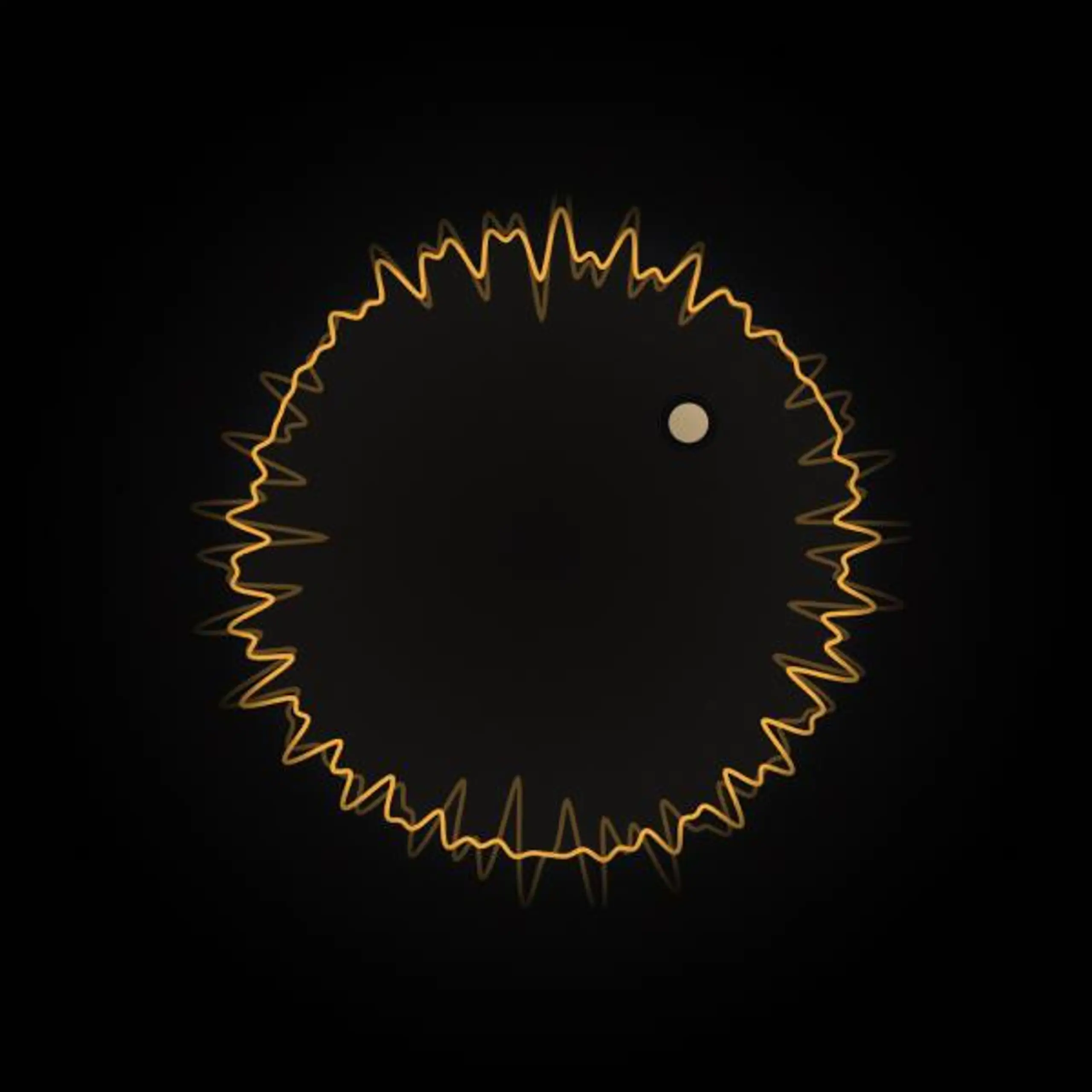

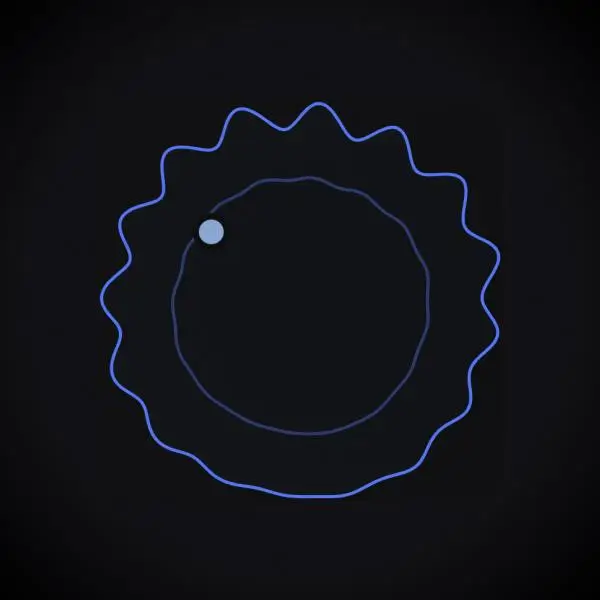

To make this intelligible, I built interactive visual feedback that showed how the sound was changing as users explored the space.

Making a new mental model feel safe

Replacing familiar compressor controls is risky. Users need to trust that the system is doing something sensible.

We used automatic gain staging and carefully tuned visual feedback so users could explore without being misled by loudness or hidden changes. This let people judge changes by how they sound and feel, not by whether the controls are set to “sensible” values.
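To make the loudness point concrete, here is a minimal sketch of level matching: scale the processed signal so its RMS level equals that of the unprocessed one, so a change is not judged better simply because it is louder. This is an assumption about the general technique, not the product's gain-staging algorithm. The names `rms` and `match_level` are hypothetical, and plain RMS is only a crude stand-in for perceived loudness (meters built on ITU-R BS.1770, for example, add frequency weighting and gating).

```python
import math


def rms(samples):
    """Root-mean-square level of a list of samples (0.0 for an empty list)."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def match_level(dry, wet, floor=1e-9):
    """Scale `wet` so its RMS matches `dry`; return (scaled_wet, gain).

    If `wet` is effectively silent, it is returned unchanged with a gain
    of 1.0 to avoid dividing by zero.
    """
    dry_rms, wet_rms = rms(dry), rms(wet)
    if wet_rms < floor:
        return list(wet), 1.0
    gain = dry_rms / wet_rms
    return [s * gain for s in wet], gain
```

For example, if processing halves the level of a test tone, `match_level` returns a gain of about 2.0 and the matched signal has the same RMS as the original. A real-time version would smooth the gain over time rather than computing it once over a whole buffer.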

Impact

Voca validated an approach to intelligent processing that prioritises user agency over prescriptive automation, broadening the brand’s accessibility without eroding professional trust.

Tim Lloyd
