The problem

ML models can look impressive in demos and still fail in production.

Early in Drum Gate’s development, we had no reliable way to tell whether a new model was actually better or just different. Even with identical training, validation, and test splits, repeated training runs produced different values for every metric.

Without a way to measure progress more reliably, we couldn’t tell whether data augmentation was really helping, or whether model simplifications were actually hurting.

Making model quality measurable

To solve this, I designed a statistical validation framework. Instead of comparing metrics from single trained models, we trained each configuration many times and compared the resulting metric distributions statistically.

The result was a system that could answer questions like:

- Is this model actually better, or just better on this one metric or this particular test split?

- How confident are we that improvements are not just overfitting?

- Are we improving the failure cases that matter most?

This turned ML iteration from guesswork into something we could manage like any other engineering problem.
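As a rough illustration of comparing configurations across repeated runs, the sketch below applies a two-sided permutation test to one metric. It is not the framework's actual implementation: the choice of test, the function name `permutation_test`, and the F1 scores are all assumptions made up for the example.

```python
import random
import statistics


def permutation_test(runs_a, runs_b, n_permutations=5000, seed=0):
    """Two-sided permutation test on the difference in mean metric.

    runs_a and runs_b each hold one metric value per training run.
    Returns (observed difference in means, approximate p-value).
    """
    rng = random.Random(seed)
    observed = statistics.mean(runs_b) - statistics.mean(runs_a)
    pooled = list(runs_a) + list(runs_b)
    n_a = len(runs_a)
    extreme = 0
    for _ in range(n_permutations):
        # Randomly reassign runs to the two groups and recompute the gap.
        rng.shuffle(pooled)
        diff = statistics.mean(pooled[n_a:]) - statistics.mean(pooled[:n_a])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value


# Hypothetical F1 scores from eight training runs per configuration.
baseline_f1 = [0.80, 0.81, 0.79, 0.80, 0.82, 0.81, 0.80, 0.79]
candidate_f1 = [0.90, 0.91, 0.89, 0.90, 0.92, 0.91, 0.90, 0.89]

difference, p_value = permutation_test(baseline_f1, candidate_f1)
print(f"mean difference: {difference:.3f}, p-value: {p_value:.4f}")
```

A small p-value suggests the gap between configurations is unlikely to come from run-to-run noise alone. Answering the other questions in the list would need further checks, such as repeating the comparison per metric and per test split.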

Making ML usable in real time

High-quality neural networks are usually too slow and too large to run inside a real-time audio plugin.

Working with a DSP engineer, I defined and drove the work needed to make Drum Gate’s models practical in real time:

- Quantising our models to reduce latency and inference time variability

- Running the models through ONNX Runtime in a multithreaded setup

- Validating that performance was predictable enough for professional sessions

This ensured the model behaviour was stable and predictable, even in large mixes.
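One way to check this kind of predictability is to time many inference calls and compare the tail of the distribution with the time available per audio buffer. The sketch below is a hypothetical offline harness, not the validation we actually ran: the function names, the stand-in workload, and the assumption that inference must finish within one buffer period are all illustrative.

```python
import math
import time


def buffer_deadline_ms(buffer_size, sample_rate):
    """Time available to process one audio buffer, in milliseconds."""
    return 1000.0 * buffer_size / sample_rate


def percentile(sorted_values, p):
    """Nearest-rank percentile of an already sorted, non-empty list."""
    rank = max(1, math.ceil(p / 100.0 * len(sorted_values)))
    return sorted_values[rank - 1]


def time_calls(fn, n_calls):
    """Call fn repeatedly and return each call's duration in milliseconds."""
    timings = []
    for _ in range(n_calls):
        start = time.perf_counter()
        fn()
        timings.append((time.perf_counter() - start) * 1000.0)
    return timings


def latency_report(timings_ms, deadline_ms):
    """Summarise typical and worst-case latency against a deadline."""
    ordered = sorted(timings_ms)
    misses = sum(1 for t in ordered if t > deadline_ms)
    return {
        "p50_ms": percentile(ordered, 50),
        "p99_ms": percentile(ordered, 99),
        "max_ms": ordered[-1],
        "miss_rate": misses / len(ordered),
    }


def fake_inference():
    """Stand-in workload; a real harness would run the model here."""
    sum(i * i for i in range(1000))


deadline = buffer_deadline_ms(buffer_size=128, sample_rate=48000)
report = latency_report(time_calls(fake_inference, 500), deadline)
print(f"deadline: {deadline:.2f} ms, report: {report}")
```

The gap between the median and the 99th percentile or maximum matters more here than the average: a model that is usually fast but occasionally overruns the buffer period is the unpredictable case.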

Preserving what users actually like about drums

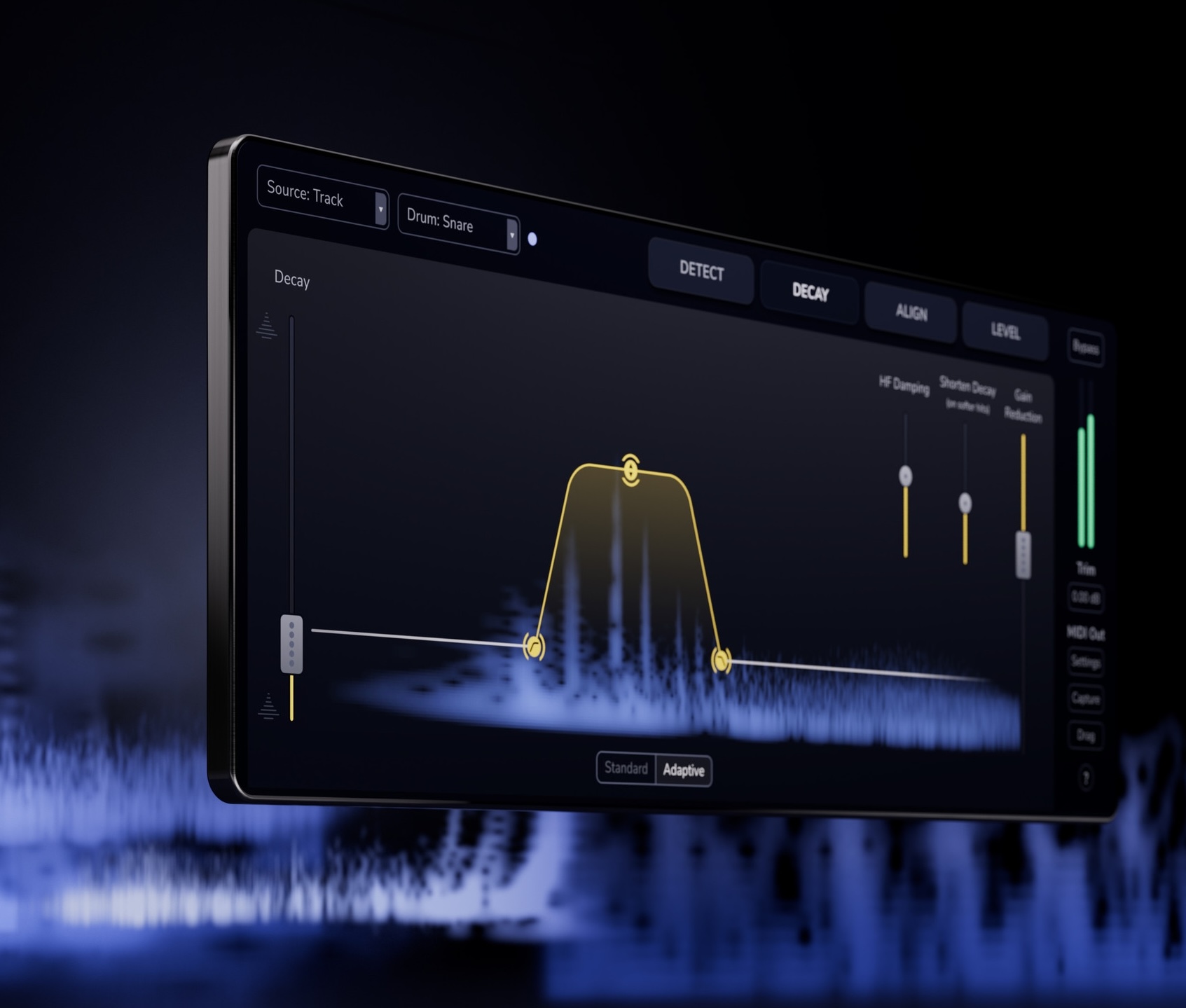

A pure ML gate could remove spill, but it also tended to kill the natural decay that gives drums their character. Current state-of-the-art source separation can help, but it leaves behind audible, unnatural artifacts.

To solve this, we created a novel spectral decay algorithm that let resonances ring naturally while still removing unwanted bleed. This gives the sonic benefits of source separation without the downsides.

Making the results intelligible

Early versions of the UI showed two competing graphs, one with history over time and the other instantaneous. This made it hard for users to understand what the system was doing and which set of controls to adjust to get the results they wanted.

We simplified this to a single confidence-over-time display that answered the question that matters most often: does the system think this is a drum hit right now?

This made the behaviour of the ML model legible without exposing more detailed controls before they were needed.

Impact

Drum Gate 2 improved the core experience of tidying up acoustic drum recordings while introducing powerful new features built on top of its machine learning drum classification.

Tim Lloyd
